Research Experience

InfoGraph - Generative Graph-based and Style-aware Infographics

Since June 2025, I have been working as a Research Assistant with Shishi Xiao, a Ph.D. student in the Brown Visual Computing Lab. Our research explores how visual representations—such as data charts, infographics, and logos—can be designed to communicate complex ideas more effectively. We aim to empower people with intelligent tools that bridge design and computation, enabling more expressive and accessible forms of visual communication.

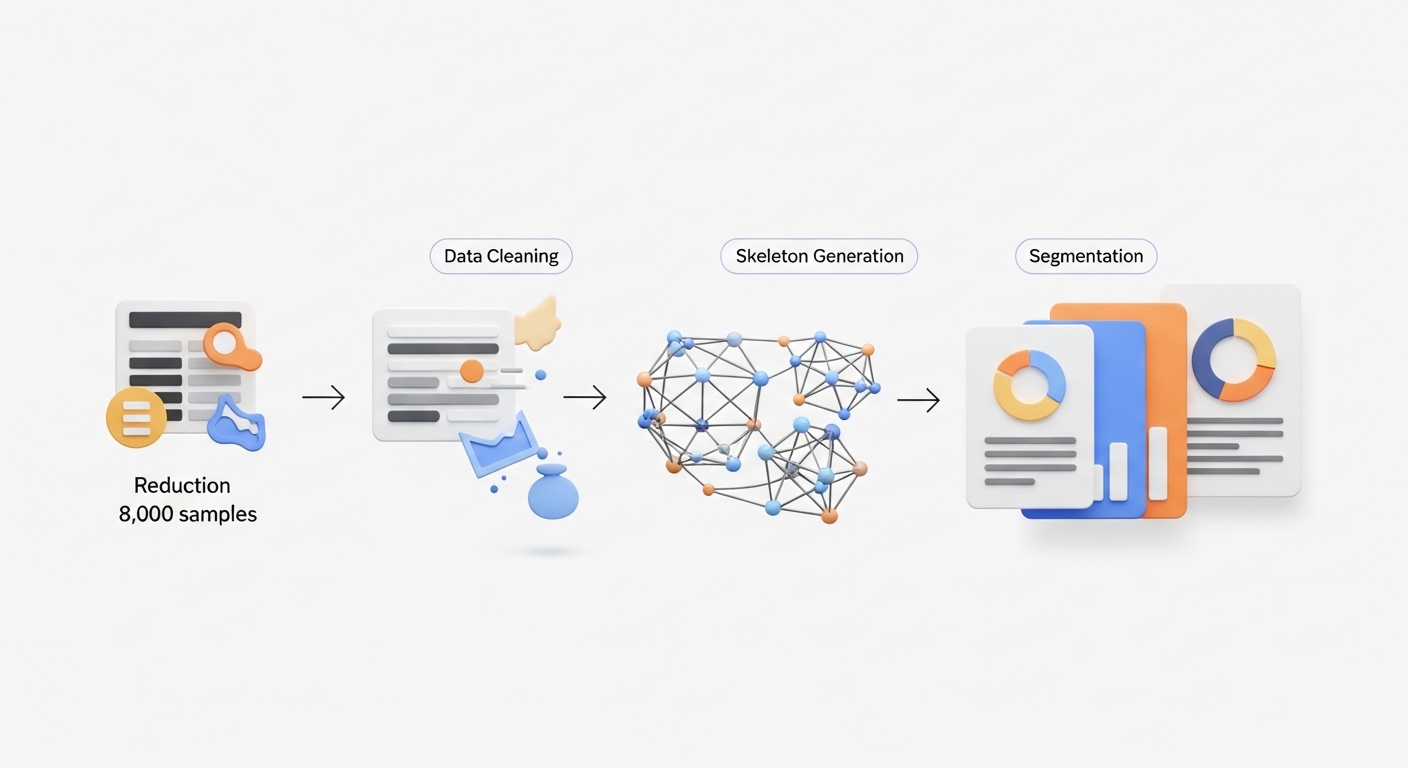

My work involves developing a data-to-visualization pipeline that constructs infographics from structured data through a skeleton-based generation framework. I also design evaluation metrics and benchmarks to systematically assess infographic quality and interpretability, and implement baseline models to compare and validate our approach.

The broader goal is AI-assisted systems that help people communicate complex ideas more clearly and creatively.

On the generation side, I began with data construction and cleaning to establish a high-quality foundation for modeling. I refined the dataset from more than 37,000 entries to roughly 11,500 carefully curated samples, ensuring both diversity and structural consistency. I then developed skeleton-based generation pipelines that produce meaningful variations of infographics by adjusting their node and connection patterns.

To support detailed analysis, I also applied the Segment Anything Model (SAM) to segment infographic elements, enabling finer-grained, element-level analysis and supporting model training.

I developed a comprehensive evaluation framework to assess the quality and interpretability of generated infographics. This involved defining quantitative metrics that measure visual coherence, structural accuracy, and semantic clarity. I also built a benchmark dataset for systematic comparison across models, ensuring consistency and fairness in evaluation. These metrics guide both model improvement and the assessment of human–AI collaboration in visual design.

I also conducted a thorough review of related work, analyzing the methods, contributions, and reported results of prior studies to establish a foundation for comparison. This included studying unfamiliar concepts and iteratively refining my documentation to ensure accuracy and completeness.

Finally, I implemented and ran baseline experiments, including the StyleAligned model, to evaluate and compare representative approaches from prior studies. I collected and organized the resulting performance data to support benchmarking and guide further model development.